AI Detects Human Traffickers, Now Missing

Posted November 06, 2023

Chris Campbell

Brandon Wirtz is an enigma.

I like to think I know some pretty plugged-in people. Few have heard of him.

In 2016, he built his own AI company called Recognant.

Before the mainstream pundits were talking about AI…

He was using it to uncover child sex trafficking… detect biased, untrustworthy news… give real-time market sentiment research… discover alternative cancer therapies… and more.

He even created an AI that could “dream.”

Here’s the thing…

Wirtz’s AI wasn’t like the AI we see in ChatGPT.

It was based on what he called “Mind Simulation,” which differs from the neural network-heavy ChatGPT.

“Neural networks,” he wrote in 2017, “are dumb black box systems.”

Sadly, Wirtz died in 2020 of colon cancer.

Most of his research and technology seems to be gone. Most mentions of Recognant are gone. All of his websites? Gone. Just a couple of obscure articles left and scattered social media posts.

(Why? That’s the biggest mystery. More in a moment.)

Here’s what this AI genius did in a span of five years.

Caught Human Traffickers on Craigslist

He trained his AI on all of Craigslist and asked it to find anomalies.

One day, it spit out an ad for a 1979 Toyota Prius.

That’s weird. The Prius didn’t go on sale until 1997.

He did a little investigating…

Turns out, it was a guy selling a kid for sex trafficking.

Using this method, Wirtz would go on to catch thousands of sex traffickers.

He infiltrated their message boards. Typical ads looked like this:

It wasn’t always as obvious as a 1979 Prius.

For example, he found 3,000 ads disguised as people selling wine. AI is powerful, he realized, but it’s easily tricked. The future is a symbiosis of AI and human investigators.

“AI didn't pick them up because many of the things advertised could exist. A 16 year old wine is normal, country of origin can be almost anything. Variety of colors, code words for other aspects used wine terminology. We only started to find them as we linked accounts and phone numbers, and then found intersections.”
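To make the "linking" idea concrete, here is a minimal sketch of how ads might be grouped once they share an account or a phone number. This is not Wirtz's code. The records, field names, and the `link_ads` helper are all made up for illustration, and the grouping uses a standard union-find structure as a stand-in for whatever he actually did.

```python
from collections import defaultdict

# Hypothetical ad records; every value here is invented for illustration.
ads = [
    {"id": "ad1", "account": "acct_a", "phone": "555-0101"},
    {"id": "ad2", "account": "acct_b", "phone": "555-0101"},
    {"id": "ad3", "account": "acct_b", "phone": "555-0102"},
    {"id": "ad4", "account": "acct_c", "phone": "555-0199"},
]


def link_ads(ads):
    """Group ads that share an account or phone number, directly or via a chain."""
    parent = {ad["id"]: ad["id"] for ad in ads}

    def find(x):
        # Walk up to the group's representative, shortening the path as we go.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    def union(a, b):
        parent[find(a)] = find(b)

    first_seen = {}  # (field, value) -> first ad id that used it
    for ad in ads:
        for key in (("account", ad["account"]), ("phone", ad["phone"])):
            if key in first_seen:
                union(ad["id"], first_seen[key])
            else:
                first_seen[key] = ad["id"]

    groups = defaultdict(list)
    for ad in ads:
        groups[find(ad["id"])].append(ad["id"])
    return sorted(sorted(group) for group in groups.values())


clusters = link_ads(ads)
print(clusters)
```

In this toy data, ad1 and ad2 share a phone number and ad2 and ad3 share an account, so all three land in one cluster even though ad1 and ad3 have nothing in common directly. That chain is the "intersection" that no single ad would reveal.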

Created An AI That Could “Dream”

In 2017, Wirtz wrote that his AI, Loki, has a “Dream Mode.”

“Dreaming in AI does two things,” he wrote. “It removes clutter, and tests ideas.”

It allows it to test things like, “What would happen if X was a Y?” or “How far from X could Y be and still work?”

It also looked at redundant knowledge and discarded it if it figured out another, better way.

One example:

It would start “dreaming” about dinosaurs. It began with the logic that dinosaurs are dinosaurs. Tyrannosaurus is a dinosaur, Apatosaurus is a dinosaur, etc.

So, it created a heuristic: “Anything ending in osaurus is a dinosaur.” That one rule replaced 3,000 separate rules, one for each kind of dinosaur.

“This may not seem like ‘dreaming,’” said Wirtz, “but it is part of what you do at the end of the day.”
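Here is a rough sketch of that kind of clutter removal, assuming the knowledge base is just a lookup table of names. The table, the `common_suffix` helper, and the `is_dinosaur` function are hypothetical and are not taken from Loki.

```python
# Hypothetical knowledge base: one explicit fact per dinosaur name.
explicit_rules = {
    "tyrannosaurus": "dinosaur",
    "apatosaurus": "dinosaur",
    "stegosaurus": "dinosaur",
}


def common_suffix(words):
    """Return the longest suffix shared by every word."""
    shared = []
    # Compare the words character by character, starting from the end.
    for chars in zip(*(word[::-1] for word in words)):
        if len(set(chars)) != 1:
            break
        shared.append(chars[0])
    return "".join(reversed(shared))


# One learned rule stands in for the whole table.
suffix = common_suffix(explicit_rules)


def is_dinosaur(name):
    """Apply the single suffix rule instead of looking up each name."""
    return name.lower().endswith(suffix)


print(suffix)
print(is_dinosaur("Brontosaurus"))
```

The payoff is that the rule also covers names the table never listed, which is the point of the heuristic. The cost is that a suffix rule can be wrong about names it has never seen.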

And another example:

It would generate various impossible scenarios starting from one recognized reason for impossibility and then seek out additional reasons.

If it was asked, “What if a cat was president?”

It would find the reasons for this to be impossible.

A.] The oldest cat was X and you have to be Y old to be president.

B.] The president must be a citizen, and cats aren’t citizens.

C.] And on.

“By creating long lists of why something couldn’t happen she can then pick an adjacent test and see if it can be proven the same way.”

For example:

Mice don't live long enough to be president. Elephants may live long enough, but they aren't citizens. So while no animal can hold office, not all animals are barred for the same reasons. That hints at a specific group of long-lived animals, like elephants, that are ruled out on other grounds.

“In this state,” said Wirtz, “the AI is learning from itself. There is a classification of animals that live longer than most animals. Age is a differentiation between humans and most animals. This is much like our own ‘nonsensical dreams.’ They are based on a portion of the rules the real world lives by, but with a few modifications that make it not quite the same.”
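The "list the reasons, then try an adjacent case" loop can be sketched in a few lines. Everything here is an assumption for illustration: the `Candidate` type, the two rules, and especially the numbers, which are placeholders standing in for the "X" and "Y" in the example above.

```python
from dataclasses import dataclass

# Illustrative placeholder, standing in for "Y" in the example above.
MIN_AGE = 35


@dataclass(frozen=True)
class Candidate:
    name: str
    lifespan_years: int  # made-up illustrative figure
    is_citizen: bool


# Each rule returns True when it makes the scenario impossible.
RULES = {
    "too short-lived": lambda c: c.lifespan_years < MIN_AGE,
    "not a citizen": lambda c: not c.is_citizen,
}


def reasons_impossible(candidate):
    """List every rule that blocks this candidate from being president."""
    return [label for label, blocks in RULES.items() if blocks(candidate)]


def group_by_reasons(candidates):
    """Group candidates that are ruled out for exactly the same reasons."""
    groups = {}
    for c in candidates:
        groups.setdefault(tuple(reasons_impossible(c)), []).append(c.name)
    return groups


candidates = [
    Candidate("cat", 20, False),
    Candidate("mouse", 3, False),
    Candidate("elephant", 60, False),
    Candidate("adult human citizen", 80, True),
]

for reasons, names in group_by_reasons(candidates).items():
    print(reasons or ("no objection",), names)
```

With these placeholder numbers, cats and mice fall into one group (blocked twice over) while elephants get a group of their own, blocked only by citizenship. That separate group is the "classification of animals that live longer than most animals" Wirtz describes the AI discovering on its own.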

Created a Fake News Detector

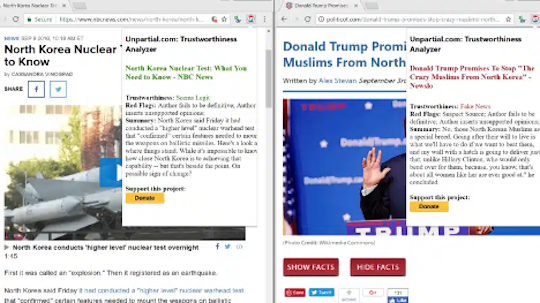

In 2018, Wirtz launched a Fake News Detector called Unpartial.

Unpartial was designed to be a “trustworthiness” detector. It didn’t check facts or validate sources. It used general rules to evaluate the “internal validity” of a story.

Before launching, they tested its accuracy by feeding it 10,000 articles that had already been rated by humans for trustworthiness.

Wirtz claimed it matched the human ratings 99% of the time.

Impossible? I have no idea.

On the left side, we have a narrative that's been stamped with a "Seems Legit" seal of approval by Unpartial, while on the flip side, there's an article flagged as lacking credibility, branded as "Fake News."

The scale of validity ranged from "Seems Legit" for the most reliable, through intermediary labels like "Consider a More Reputable Source" and "Seems Sketchy," down to "Super Shady" and finally "Fake News" for those deemed the least trustworthy.
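For a sense of what "matched the human ratings 99% of the time" would mean in practice, here is a minimal sketch of the arithmetic. It assumes a "match" means the exact same label on the five-step scale, which the original claim doesn't specify. The sample ratings are invented and `agreement_rate` is a hypothetical helper.

```python
# The five labels named above, from most to least trustworthy.
LABELS = [
    "Seems Legit",
    "Consider a More Reputable Source",
    "Seems Sketchy",
    "Super Shady",
    "Fake News",
]


def agreement_rate(model_labels, human_labels):
    """Fraction of articles where the model's label equals the human label."""
    if len(model_labels) != len(human_labels):
        raise ValueError("both lists must rate the same articles")
    if not model_labels:
        raise ValueError("need at least one rated article")
    matches = sum(m == h for m, h in zip(model_labels, human_labels))
    return matches / len(model_labels)


# Invented ratings for five articles; the real test reportedly used 10,000.
human = ["Seems Legit", "Fake News", "Seems Sketchy", "Super Shady", "Seems Legit"]
model = ["Seems Legit", "Fake News", "Seems Sketchy", "Fake News", "Seems Legit"]

print(agreement_rate(model, human))
```

On 10,000 articles, a 99% rate under this definition would leave about 100 disagreements.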

Created a TLDR Summarizer

Long before ChatGPT, Wirtz created an application that could summarize any article, video, or long-form text.

Automated Cancer Research

He also, for obvious reasons, wanted to help with cancer research.

“I am also working on automating cancer research,” he wrote in 2018, “by extracting information relevant to a patient from all of the medical journals ever published. This has led to off-label uses of drugs to treat and slow cancers.”

The AI was also used to test the trustworthiness of alternative cancer therapies based on the available research.

Where is this now? No idea.

Trained His AI to Answer Existential Questions

Went Beyond Neural Nets

As mentioned, ChatGPT relies primarily on neural networks, specifically deep learning models like transformers, to understand and generate human language.

This system learns from vast datasets. BUT its operation is less transparent due to the complexity of the neural networks.

In contrast, Mind Simulation, developed by Wirtz, used rule-based processes to emulate the human mind, aiming for transparency and a clear understanding of its decision-making processes.

Wirtz argued that Mind Simulation was a relatively new field -- about a decade old -- whereas neural networks have been in development for 50 years.

Though it took more time to perfect…

He seemed confident it was the superior choice.

This is a contentious subject, but an important one given that AI is moving into more high-stakes domains like healthcare, finance, self-driving cars, etc.

Transparency in decision-making will be key.

He Was a Critic of Neural Networks

Here were his criticisms of neural networks:

A.] Neural networks are simpler than many presume. He equated building one to converting basic code.

B.] Adding layers to create "deep" neural networks is trivial, essentially looping existing code without significant innovation.

C.] Neural networks trained on GPUs may not perform the same on smartphones due to variations in random number generation, affecting results. (I think ChatGPT proved this one wrong.)

D.] Neural networks can overfit data and draw spurious correlations, like attributing personal traits to physical appearances in photos.

There are more, but these are the main ones.

In short, Brandon Wirtz was a genius. He was a testament to the power of AI in the right hands.

It seems a little weird that all of his research is gone.

Seems more people should know his name.

Tomorrow, we'll dive deeper into these insights... and see if they hold any AI investment clues.