Anthropic ✓. OpenAI ✗. Pentagon ?.

Posted March 03, 2026

Chris Campbell

Last July, Anthropic—the company behind the AI model Claude—signed a $200 million deal with the Pentagon.

Claude became the first AI cleared for classified military networks.

Then things got interesting.

The Pentagon wanted Claude available for, and I quote, "all lawful purposes."

No restrictions. No carve-outs. Carte blanche.

Anthropic said no on two points.

- No mass domestic surveillance.

- No fully autonomous lethal weapons.

The Pentagon said: “Those things are already illegal, so why do we need to write it into a contract with a private company?”

Anthropic’s reply: “all lawful purposes” is a much bigger tent than it sounds.

Current law allows government agencies to purchase enormous amounts of personal data from commercial brokers without a warrant.

That’s technically lawful.

It’s also, if you run it through a sufficiently powerful AI model, functionally indistinguishable from mass surveillance.

In short, Anthropic was saying the law has a gap. They didn’t want their tools sitting in that gap.

The Pentagon walked.

Then…

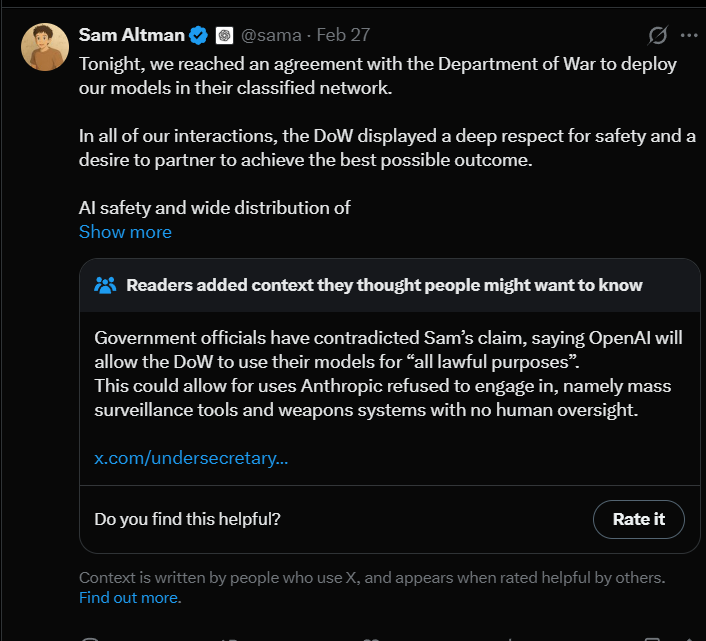

OpenAI Stepped In

On February 27th, Trump ordered every federal agency to dump Anthropic products within six months.

On X, Defense Secretary Hegseth slapped a "supply chain risk" label on the company—a designation normally reserved for Huawei and other Chinese hardware firms.

Sam Altman smelled blood in the water and started swimming.

Hours later—hours—OpenAI announced it would replace Anthropic on the Pentagon's classified network.

Even without the Iran strikes happening the next day, the timing was comically bad.

Altman admitted on X that the OpenAI deal "looked opportunistic and sloppy" and said he "shouldn't have rushed."

He acknowledged that the optics of agreeing to the Pentagon deal hours after the Trump administration labeled Anthropic a "supply-chain risk" didn't "look great."

OpenAI claims to have amended its Pentagon contract to add stronger surveillance protections—closing a loophole that would've let the military use AI on commercially purchased data.

Your geolocation. Your browsing history. Your financial records. All the stuff data brokers sell to anyone with a credit card.

So the final outcome? Roughly where Anthropic started.

Anthropic Wins the Battle (For Now)

In reality, the Pentagon probably did Anthropic a favor.

Every frontier AI lab—Anthropic, OpenAI, Google—is GPU-constrained right now. Data-center GPUs are effectively sold out for months, with lead times stretching between 36 and 52 weeks.

So when the Pentagon "punishes" Anthropic by pulling a $200M contract, what actually happens?

The GPUs that were running classified workloads will likely get redirected to enterprise customers who pay market rates, don't need classified infrastructure, and don't come with the reputational baggage.

So far, the net financial hit isn’t even a rounding error.

It’s positive.

The Claude app got flooded with so many downloads it hit #1 on the App Store for the first time. At the same time, ChatGPT uninstalls spiked 295% over the weekend.

Meanwhile, Altman has been in perpetual damage control.

In truth, losing that contract will likely prove to be a net positive.

One Tremendous Caveat

The public debate isn’t about one contract. It’s about a question that's going to define the next decade:

Who decides what AI is allowed to do?

Right now, the companies building these tools set the limits.

And that’s no reason to get comfortable.

The next version of this battle won't involve a sympathetic company drawing a bright line. It'll be murkier. The government will be subtler. And the public likely won't be paying attention.

The limits on what your AI tools can and cannot do are not fixed. They're negotiated. They're political. And they are always up for grabs.

The only real option is to use AI to tilt the game in your favor… on your own terms.

As AI transforms investing, this has never been more important.