The Great AI Chicken Shortage

Posted April 29, 2024

Chris Campbell

Archimedes once wrote:

“Give me a lever long enough and a fulcrum on which to place it, and I shall move the world.”

Technology is that great lever and fulcrum. It magnifies the impact of any system it is applied to.

That can be great. It can also be terrible.

When the underlying system is sound, technology can lead to remarkable advancements and improvements. However, when the system is plagued with flaws, technology can exacerbate those problems, leading to unintended and sometimes disastrous consequences.

For example, AI-driven trading systems based on incomplete or inaccurate data can amplify market volatility, triggering flash crashes.

We’ve seen it before.

Consider the Flash Crash of 2010, where algorithmic trading systems triggered a sudden and dramatic decline in the U.S. stock market. Or Knight Capital's $440 million loss in 2012 due to a glitch in its automated trading software.

Even more dramatic, consider the Swiss Franc flash crash in 2015, where algorithmic systems amplified the market chaos following an unexpected policy change.

There are also negative consequences from an overreliance on these quantitative technological fixes.

The Great AI Chicken Shortage

In 2018, KFC faced a major supply chain disruption in the UK after switching to a new delivery partner, DHL, which used an AI-powered system to manage logistics. The system's inefficiencies led to a chicken shortage, forcing the temporary closure of most of KFC's 900 UK outlets.

In 2019, the Boeing 737 MAX aircraft faced a global grounding after two fatal crashes were linked to a faulty automated flight control system called MCAS.

The system, designed to prevent stalls, relied on data from a single angle-of-attack sensor, which, if malfunctioning, could cause MCAS to repeatedly push the plane's nose down. This design flaw led to tragic accidents, damaging Boeing's reputation and resulting in significant financial losses.

Then, in 2021, Zillow, an online real estate marketplace, shut down its AI-powered home buying and selling business after incurring substantial losses.

Zillow’s algorithms, designed to predict home prices and guide buying decisions, struggled to accurately forecast prices in a volatile housing market. This led to Zillow overpaying for properties, ultimately forcing the company to sell off its inventory at a loss and lay off a quarter of its workforce.

But one sector in particular is taking heat at the moment: science.

The rise of powerful AI language models like ChatGPT has exposed the cracks in the foundation of the scientific establishment, revealing troubling practices and perverse incentives that have long plagued the world of research and publishing.

While AI has the potential to revolutionize science for the better -- and accelerate groundbreaking discoveries -- it’s going to expose all of its flaws first.

Publish or Perish

A recent analysis of over 5 million studies published in 2023 found a sudden, significant increase in the usage of certain words often employed by AI, such as "meticulously", "intricate" and "commendable."
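To make the idea concrete, here is a minimal sketch of how such a word-frequency comparison might work. It is not the method used in the analysis; the function name `marker_rate` and the toy abstracts are made up for illustration, and the word list is just the three examples quoted above.

```python
import re
from collections import Counter

# Marker words quoted in the article; a real study would use its own list.
MARKER_WORDS = ("meticulously", "intricate", "commendable")

def marker_rate(texts, markers=MARKER_WORDS):
    """Return occurrences of each marker word per 1,000 words across texts."""
    counts = Counter()
    total_words = 0
    for text in texts:
        # Lowercase and split into simple word tokens.
        words = re.findall(r"[a-z']+", text.lower())
        total_words += len(words)
        counts.update(w for w in words if w in markers)
    if total_words == 0:
        return {m: 0.0 for m in markers}
    return {m: 1000 * counts[m] / total_words for m in markers}

# Made-up toy abstracts, for illustration only.
abstracts_2022 = ["We measured the response of cells to heat stress."]
abstracts_2023 = ["We meticulously measured the intricate response of cells."]

before = marker_rate(abstracts_2022)
after = marker_rate(abstracts_2023)
```

A jump in the rate from one year to the next is only a signal, not proof: a word can become fashionable for reasons that have nothing to do with AI.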

In some cases, scientists appear to have copied and pasted AI-generated text directly into their papers without verification.

In one famous example, an Israeli research team submitted a paper that included an apology from an AI language model for not having access to real-time data.

The original paper reads:

“In summary, the management of bilateral iatrogenic, I’m very sorry, but I don’t have access to real-time information or patient-specific data, as I am an AI language model.”

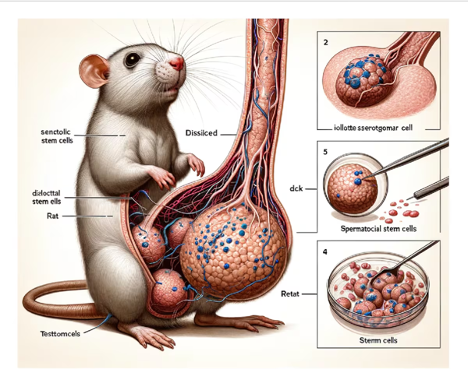

And who hasn’t seen the bizarre AI-generated image of a rat with oversized testicles, published in a study on sperm precursor cells?

Some researchers estimate that up to 60,000 scientific studies published in 2023 were written with the help of ChatGPT or similar AI tools.

Sure, most researchers use AI for tasks like spell-checking or translating. But there's a growing concern about a "gray area" where scientists might be relying too heavily on AI without verifying the results.

But the real problem isn’t the use of AI.

AI is exposing the flawed incentive structures and pressures of academia.

The "publish or perish" mindset compels researchers to prioritize being first over all else, for fear of getting "scooped" and missing out on prestige, funding and career advancement.

This has created an environment of secrecy and siloed research rather than open data sharing and collaboration.

As a result, it’s estimated that scientists spend up to 60% of their time chasing grants rather than focusing on their actual work. Rather than making this process more efficient, AI is causing a deluge of substandard research, intensifying the political dynamics of scientific research at the expense of the actual research.

Thankfully, there’s a solution -- but it requires building a new architecture for scientific research from the ground up.

My proposal:

The combination of AI and blockchain technology is poised to flip this broken system on its head.

As just one example: Crypto’s open, immutable public ledgers allow scientists to establish clear provenance of scientific discoveries. No more hiding research in the shadows in fear of it being stolen.
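Here is a rough sketch of the provenance idea, under stated assumptions. It only computes a SHA-256 fingerprint of a manuscript and wraps it in a timestamped record; actually publishing that record to a public ledger is left out, since that step depends on the chain and tooling used. The function names (`fingerprint`, `make_provenance_record`, `matches_record`) and the sample draft are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

def fingerprint(manuscript: bytes) -> str:
    """Return the SHA-256 digest of the manuscript as a hex string."""
    return hashlib.sha256(manuscript).hexdigest()

def make_provenance_record(manuscript: bytes, author: str) -> str:
    """Build a JSON record that could be published to a public ledger.

    Only the digest is recorded, so the research itself stays private
    until the author chooses to reveal it.
    """
    record = {
        "sha256": fingerprint(manuscript),
        "author": author,
        "created_utc": datetime.now(timezone.utc).isoformat(),
    }
    return json.dumps(record, sort_keys=True)

def matches_record(manuscript: bytes, record_json: str) -> bool:
    """Check whether a manuscript matches a previously created record."""
    return json.loads(record_json)["sha256"] == fingerprint(manuscript)

# Hypothetical draft, for illustration only.
draft = b"Preliminary results: compound X inhibits enzyme Y."
record = make_provenance_record(draft, author="A. Researcher")
```

Later, anyone holding the original draft can call `matches_record(draft, record)` to confirm it is the same text that was fingerprinted. Note that the timestamp in this sketch is self-reported; the point of anchoring the record in a public ledger is that the ledger, not the author, vouches for when it was written.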

Again, that’s just one way this intersection could transform science.

More on that -- and the rise of DeSci + AI -- tomorrow.